The specter of a significant regulatory recall now looms over Tesla's most advanced and controversial technology. The National Highway Traffic Safety Administration (NHTSA) has escalated its long-running probe into Tesla's Full Self-Driving (FSD) system, focusing specifically on its performance and safety during poor visibility conditions like rain, fog, and glare. This engineering analysis, a critical step before a potential recall, centers on whether Tesla's camera-only "vision" system and its associated driver-monitoring safeguards are adequate when the road ahead becomes obscured.

The Core of the Investigation: A System Under Stress

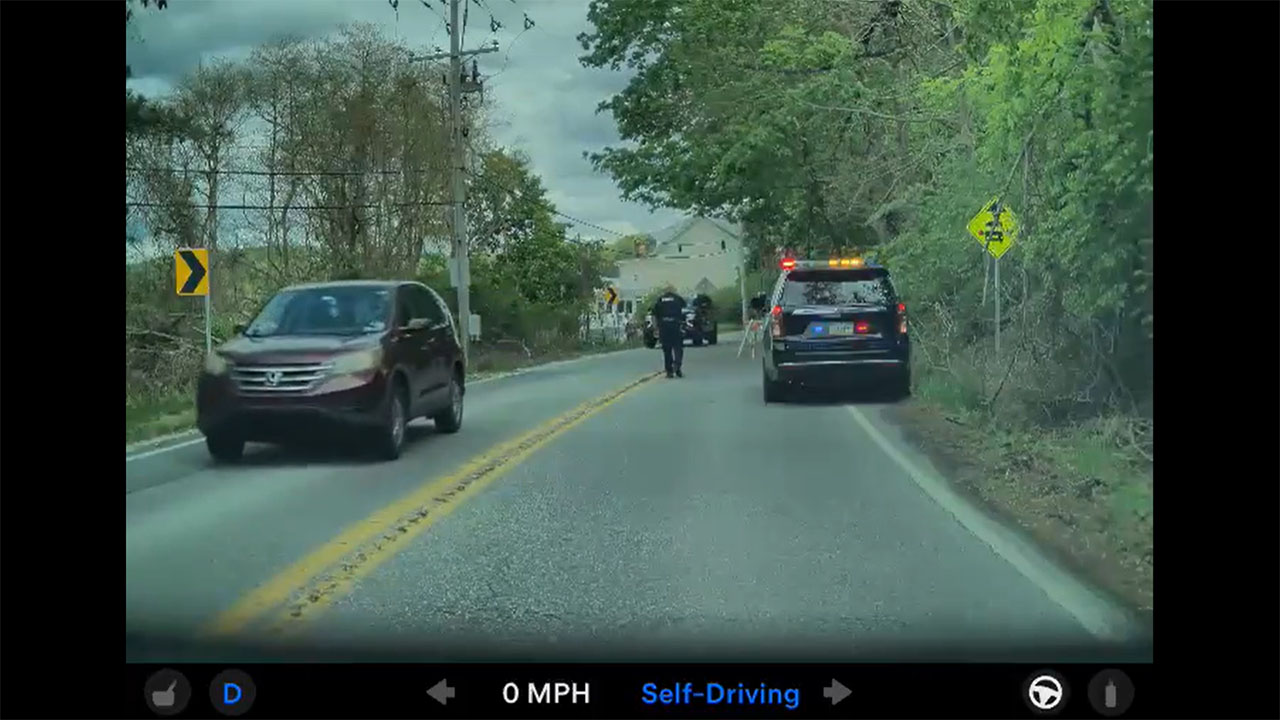

At the heart of the NHTSA's concerns is how the system behaves when its primary sensors, the cameras, are compromised. Tesla vehicles equipped with FSD use a "degradation detection" system designed to alert the driver to take immediate control when environmental conditions overwhelm the cameras. The investigation is scrutinizing whether these alerts are sufficient and timely, and whether they occur at all before a potential collision. The agency is examining 16 separate crash incidents, some involving injuries, in which FSD may have been engaged in low-visibility conditions. That review calls into question the fundamental robustness of a vision-only approach in an imperfect world.

Vision-Only Philosophy Meets Regulatory Scrutiny

This expanded probe strikes directly at Tesla's core technological bet. Most other automakers developing advanced driver-assistance systems use radar, and in some cases lidar, as redundant sensors. Tesla, by contrast, has famously championed a pure "vision-based" architecture, arguing that humans drive using eyes and a brain, and that cameras paired with sophisticated AI can do the same. Regulators are now pressing Tesla to show that this claim holds under all conditions. The investigation will determine whether this design philosophy introduces an unacceptable risk, especially if the vehicle's software fails to recognize its own limitations and to ensure that the human driver re-engages when needed.

The implications of this analysis extend beyond the specific crash incidents. It represents a rigorous, technical challenge to Tesla's entire development and deployment strategy for FSD, which has been rolled out to hundreds of thousands of owners on public roads under the "beta" label. A finding of defect by the NHTSA could force Tesla to implement more conservative operational limits, enhance its driver monitoring, or even retrofit vehicles—a monumental undertaking that would constitute one of the most complex software-centric recalls in automotive history.

For Tesla owners and investors, the escalating FSD investigation injects substantial uncertainty. A mandated recall, while not yet certain, could delay the company's timeline for achieving a truly autonomous system and impact the perceived value of the $12,000 (or $199/month) FSD package. It may also influence insurance costs and public perception of the technology's safety. Conversely, a resolution that satisfies regulators without major hardware changes could validate Tesla's vision-based path. In the immediate term, this development is a stark reminder that, as the system is currently designed, the driver remains legally and functionally responsible. That reality applies every time the FSD button is pressed, especially when the weather turns.