In a stark reminder that technology has not rewritten the law, a California man's attempt to use his Tesla's driver-assistance system as a designated driver has backfired spectacularly, resulting in a DUI arrest while he was asleep behind the wheel. The incident, which unfolded on a Northern California highway, underscores the persistent and dangerous gap between public perception of Autopilot capabilities and the legal reality that the human driver remains fully responsible.

The Limits of "Full Self-Driving"

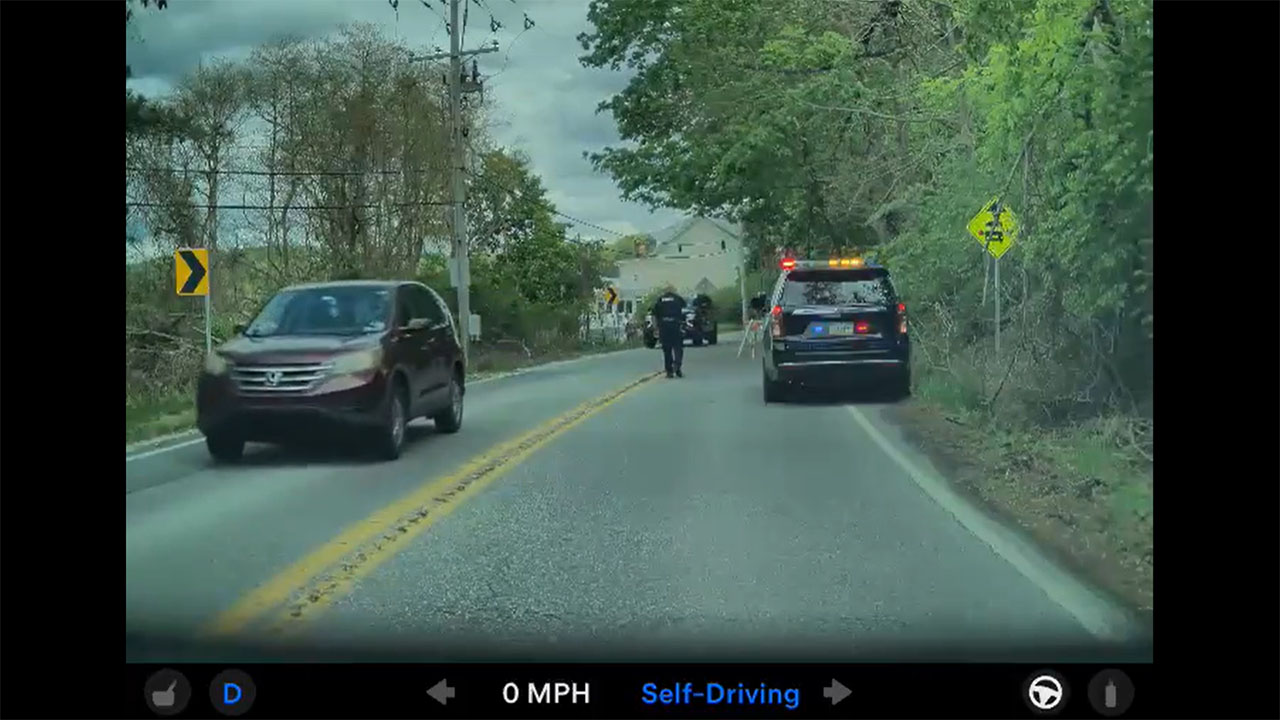

According to reports from the Vacaville Police Department, an officer observed a Tesla Model 3 traveling at approximately 55 mph on Interstate 80 with the driver apparently asleep. The vehicle was reportedly operating with Tesla's Full Self-Driving (FSD) beta software engaged. After following the car and confirming that the driver was unconscious, officers performed a maneuver to bring the Tesla to a controlled stop. Upon waking the 45-year-old driver, they determined he was under the influence of alcohol. Although the car had been navigating on its own, the driver was arrested on suspicion of DUI. California law prohibits driving under the influence, and the person in the driver's seat of a moving car remains its driver whether or not an automation feature is engaged.

A Persistent and Dangerous Misconception

This case is not an isolated event but part of a troubling pattern where drivers conflate advanced driver-assistance systems with autonomous vehicle technology. Tesla's own warnings state that its systems require "active driver supervision" with hands on the wheel and full attention on the road, a directive clearly ignored in this scenario. The incident highlights a critical challenge for EV manufacturers and regulators: managing the behavioral risks created by over-trust in partially automated systems. For the broader electric vehicle industry, such high-profile misuse risks eroding public and regulatory trust in the gradual rollout of automated features.

The legal implications are unequivocal. Across the United States, DUI statutes govern who operates and controls a vehicle, not the state of its automation. An intoxicated person behind the wheel is considered to be in control of the vehicle, and therefore liable, regardless of whether a system is doing the steering. This arrest sends a clear message to all Tesla owners: the car's advanced software is a driver-assistance feature, not a liability shield. Law enforcement agencies are increasingly trained to recognize the use of systems like Autopilot and to treat the human occupant as the responsible operator.

Implications for Tesla and Its Community

For Tesla investors, this incident represents a recurring reputational and regulatory headwind. Each instance of driver misuse provides ammunition for critics and safety advocates calling for stricter oversight of, or limits on, the deployment of FSD technology. It also strengthens the case for more robust, perhaps even intrusive, driver monitoring systems to combat complacency. For Tesla owners, the takeaway is a critical legal and safety warning. Relying on current-generation automation as a fail-safe for impaired driving is not only dangerously irresponsible but also an invitation to arrest, severe penalties, and potential tragedy. The vehicle's capability does not absolve the driver of legal responsibility.