Regulatory scrutiny of Tesla's most advanced driver-assistance system has intensified. The National Highway Traffic Safety Administration (NHTSA) has escalated its long-running investigation into Tesla's Full Self-Driving (Supervised) software to an Engineering Analysis, a critical step closer to a potential recall. The upgrade follows the agency's discovery of additional concerning incidents, particularly in low-visibility conditions where the system's cameras may be compromised.

From Preliminary Evaluation to Engineering Analysis

NHTSA's Office of Defects Investigation initially opened a preliminary evaluation in August 2021, focusing on Tesla vehicles operating on Autopilot that were involved in collisions with stationary emergency vehicles. The probe has since broadened significantly. The shift to an Engineering Analysis, which covers an estimated 830,000 Tesla vehicles from the 2014-2023 model years, allows investigators to demand more data from the company and conduct more rigorous testing. This phase is designed to determine the scope, frequency, and root causes of the suspected defects and to formally assess whether they pose an unreasonable safety risk.

Low-Visibility Conditions Emerge as a Critical Weakness

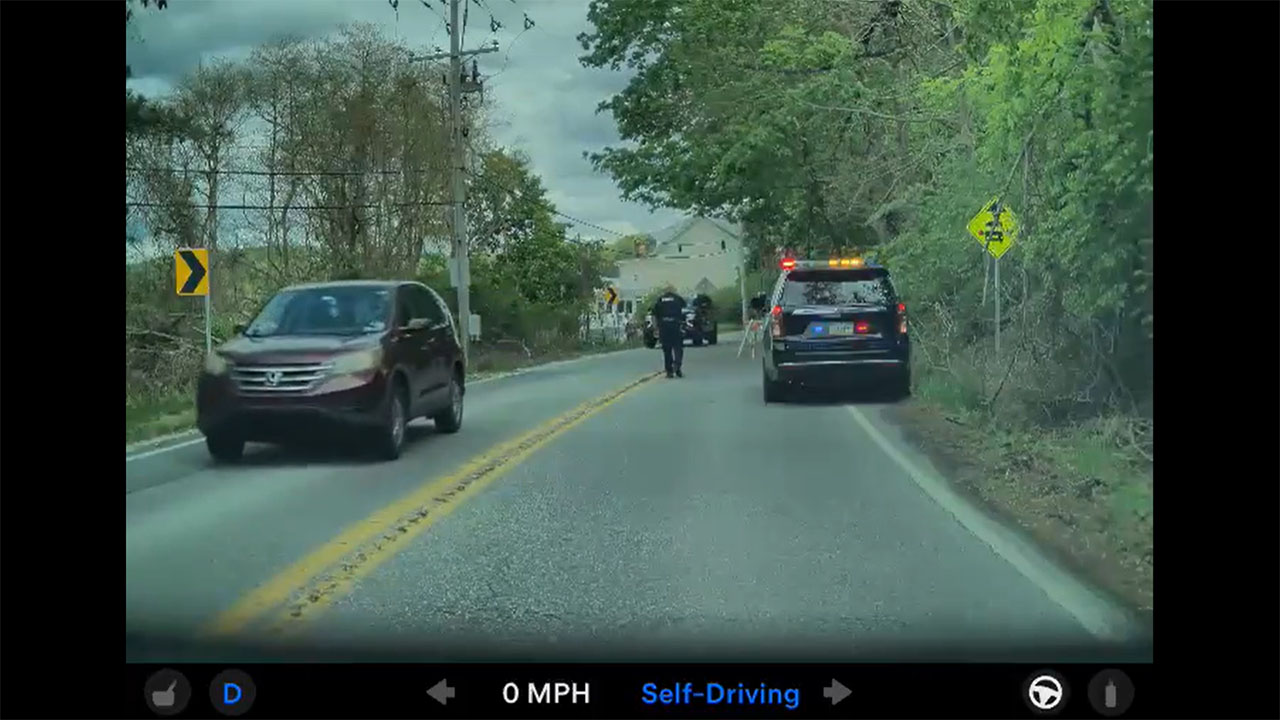

Central to the investigation's escalation are newly analyzed crashes and near-misses where FSD (Supervised) and Autopilot appeared to fail when confronted with poor lighting or obscured visual cues. The agency cited specific incidents where Teslas struck crossing vehicles, motorcycles, and parked cars in dawn, dusk, or shadowed environments. This pattern suggests a potential systemic limitation of Tesla's camera-centric "Tesla Vision" architecture, which lacks the supplemental sensor data from radar or lidar used by many other automakers. The system's performance at intersections, especially those without adequate overhead lighting, remains a key focus for regulators.

For Tesla, the timing of this intensified probe is particularly sensitive. The company is aggressively marketing its FSD capability, recently rolling out a free one-month trial to most eligible vehicles in North America and pushing for wider adoption of its "supervised" autonomy. This regulatory friction highlights the growing pains of deploying such advanced software at scale in the real world, where unpredictable weather, lighting, and infrastructure present constant challenges. Tesla's ability to address these edge cases through software updates will be under a microscope.

The implications differ for Tesla owners and investors. Owners using FSD (Supervised) must remain hyper-vigilant, especially during sunrise, sunset, or in areas with stark shadows, understanding that the system may not perform reliably in low-visibility scenarios. A potential recall, while not certain, could mandate a significant software modification that might alter the system's operational capabilities. For investors, the probe represents a persistent overhang, threatening both the near-term revenue potential of the high-margin FSD software and the long-term narrative of Tesla as the undisputed leader in autonomous driving technology. How the company navigates this heightened regulatory engagement will be a defining story for the year ahead.