In the relentless pursuit of full autonomy, Tesla's incremental software updates often focus on headline-grabbing capabilities like navigating complex intersections or handling highway merges. However, a recent social media post from CEO Elon Musk has spotlighted a seemingly minor, yet profoundly human-centric, advancement that underscores the system's growing sophistication. By highlighting that "Tesla self-driving now recognizes hand signals," Musk pointed to a feature that bridges the gap between AI logic and the nuanced, often unpredictable world of human road users.

The Subtle Art of Human-Centric AI

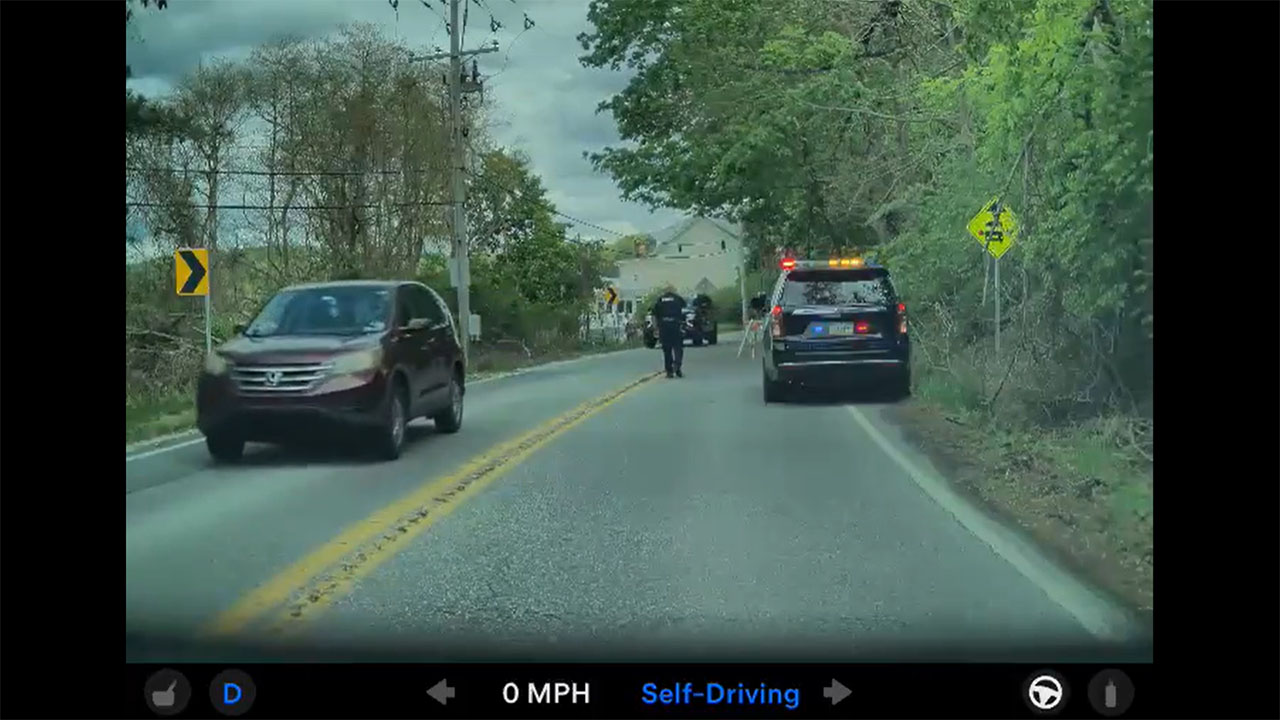

Recognizing a cyclist's extended arm or a construction worker's wave is a deceptively complex task for a neural network. The system must not only identify a human form but also infer intent and likely trajectory from non-standardized, often ambiguous gestures. This move beyond rigid traffic laws and into behavioral prediction is a significant step. It means Tesla's Full Self-Driving (Supervised), or FSD, is being trained to understand the informal communication that keeps traffic flowing safely in real-world scenarios, where not every situation is covered by a painted line or a traffic light.

Beyond the Code: Context is King

This feature is far more than a parlor trick. In practical terms, it allows a Tesla using FSD to anticipate a cyclist's turn before the rider physically initiates it, or to yield to a pedestrian or worker directing traffic. It adds a layer of contextual awareness that is critical for achieving a safety profile exceeding human capability. By interpreting these organic signals, the vehicle moves from merely following rules to actively participating in the social fabric of the road. This development directly addresses a common critique of autonomous systems: their potential rigidity in dynamic, human environments.

The implementation also speaks volumes about Tesla's data-driven development cycle. The ability to reliably recognize hand signals is almost certainly the result of training the neural network on millions of video clips from the fleet, capturing countless edge-case interactions. This continuous learning loop, where real-world data refines software, is a core competitive advantage for Tesla and a foundational element for achieving broader regulatory and public acceptance of autonomous driving technology.

For Tesla owners and investors, this underrated feature is a tangible indicator of progress. It demonstrates that development is advancing beyond core vehicle control and into the finer points of real-world integration. For owners, every such enhancement improves the daily driving experience, increases safety margins, and builds public trust. For investors, it supports the depth and scalability of Tesla's AI approach, suggesting the company is systematically working through the long-tail problems of autonomy. As these capabilities compound, they strengthen the value proposition of the FSD suite and the technological moat around the entire Tesla ecosystem.